Urban Fragments

Tokyo, October 4th 2025

Jonathan Russell

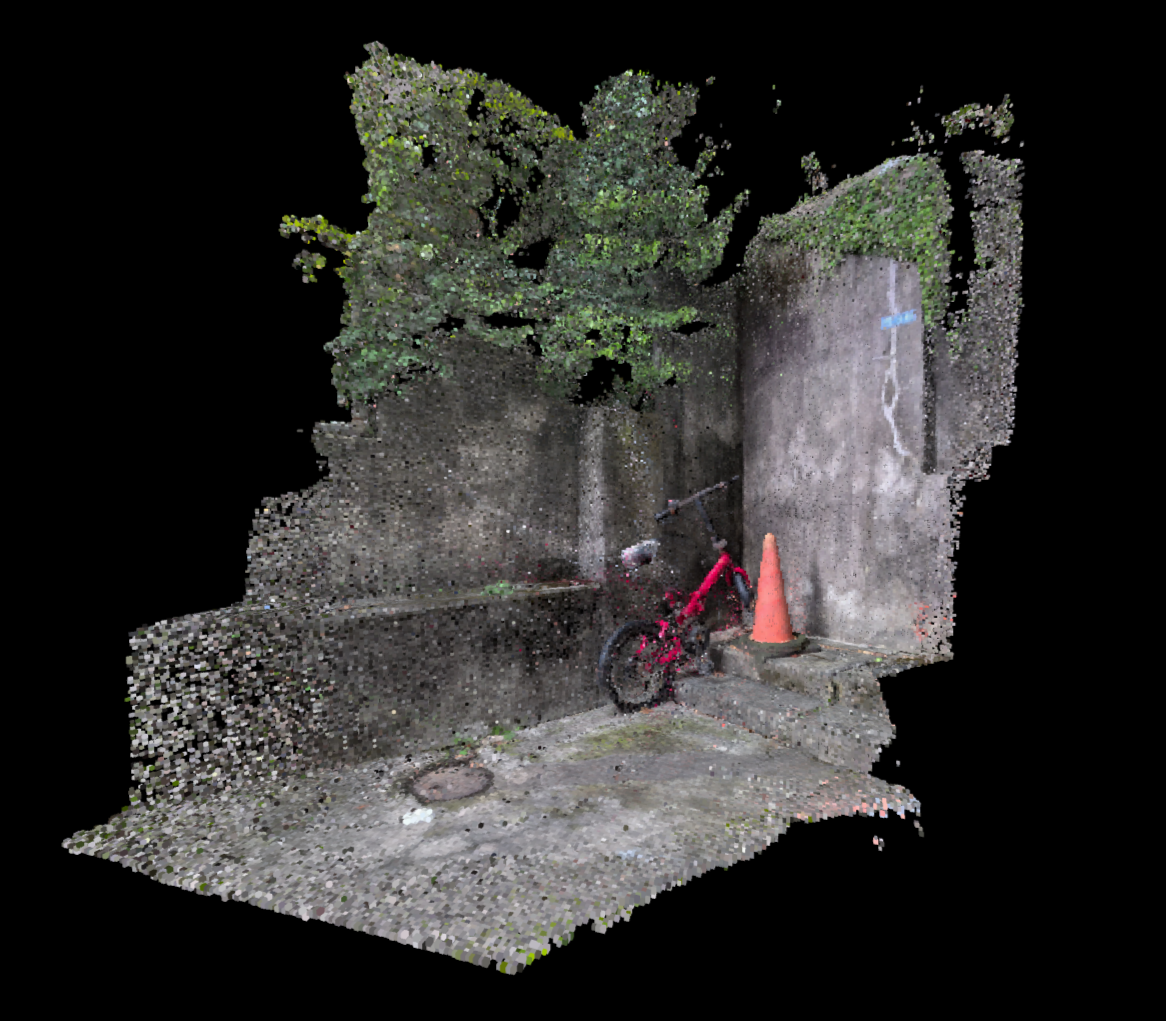

On October 4th in a quiet Tokyo backstreet, a child’s bike and a fading orange traffic cone lean against a cracked-concrete retaining wall grown over with vines. The sound of chirruping birds is joined by the cresting white static of approaching rain.

On October 17th, an alleyway in Seoul’s fading printworks district echoes with the rhythmic hum and clatter of room-sized machines churning through advertising flyers. The blind corner of two narrow passages is cluttered with signage and sundry pipework to support the work continuing inside.

On October 26th in Chongqing, the wall behind a souvenir store in a former banknote factory hosts a serpentine tangle of electrical cables and junction boxes. Warm yellow light and the sound of tourists spill out through a small rear window.

These are three of 35 Fragments that make up the Urban Fragments Project (fragment.jrau.info). Created in late 2025, the project is a custom workflow for capturing, processing and sharing atmospheric 3D snapshots of small urban spaces, and was tested over 5 weeks of daily updates across Japan, China and Korea. The project speculates on the as-yet unrealised potential of photogrammetry to create quick, impressionistic recordings of the phenomenology of ordinary urban spaces. These Fragments, each captured in less than 2 minutes, are rapid 3D scans that seek to evoke the atmosphere of a place without requiring any specialised equipment. The project also speculates on the possibility of platform-agnostic spatial capture and sharing, and on the potential of LLM-assisted coding to enable highly specialised custom workflows for artists and non-professional coders.

Seoul, October 15th 2025

Jonathan Russell

Since the early 20th century, amateur photographers have adopted technological advancements in photography to more evocatively capture their experience of a place.

In 1900, Kodak’s Brownie cameras put snapshot photography within reach of millions. In the subsequent decades, advancements in camera technology, standardisation of film and the popularisation of colour photography dramatically improved the accessibility and fidelity of photography for non-professionals. By the 21st century, digital photography, smartphone photography, and later smartphone videography made it increasingly easy to capture rich records of ordinary places. At the same time, social media exploded the horizon for sharing these records. Piece by piece, it became possible for amateurs to capture the phenomenology of a place and share it widely - think of the ubiquitous Instagram panorama from some far-flung white sand beach, or the 4K stereo ASMR walking tour on YouTube that invites us to a virtual first-hand experience of a rainy night in Tokyo. Still, these evocative online experiences are limited by the boundaries of linear video - the obvious next step in the phenomenological fidelity of place capture is towards interactive, three-dimensional recreations of real-world spaces.

The end-state of this pathway was first sketched out in science fiction many years ago - Mike Pondsmith’s Cyberpunk Braindances are a well-known example, an immersive medium for recording and sharing full-spectrum sensory experiences. As goes sci-fi, so goes Silicon Valley - today, Meta, Epic Games and countless start-ups have together invested billions in capturing and recreating real-world spaces in 3D. We are not yet ready to Braindance, however - the cutting edge of spatial capture is a terrain of walled gardens, narrowly defined use cases and specialised, expensive equipment. Surveying this status quo of 3D capture and viewing provides some insight into its limitations.

The most phenomenologically complete recreations of real-world 3D spaces today are found in video games. Epic Games, flush with Fortnite cash, owns all the elements of a capture and representation pipeline, from photogrammetric scanning (RealityScan), through sharing and selling 3D scanned objects (Megascans and Sketchfab), to its Unreal Engine and distribution via the Epic Games Store. Games created using Epic’s workflow can achieve extraordinary fidelity and immersion, but Epic’s business is mostly geared towards the creation of new virtual spaces, not the accurate replication of existing real-world spaces. As such, the company does not have a single continuous pipeline from scan to game engine: Epic’s workflows are designed for game makers who, even when capturing from the real world, expect the final space to be extensively manipulated and re-authored along the way.

Another common use case for 3D capture is in selling houses. Matterport, owned by real estate technology company CoStar, offers an end-to-end solution for scanning, hosting and sharing the company’s proprietary ‘digital twins’. These virtual spaces, now almost ubiquitous in residential real estate listings, seamlessly meld rough 3D scans with a series of panoramic photos to create a virtual house tour. Matterport’s virtual duplicates are easy to share and easy to navigate, but slow and expensive to capture - scanning is via Matterport’s proprietary software, typically using the company’s specialised LIDAR-based scanners, and hosting scans requires a Matterport subscription. The resulting virtual space is often highly detailed but essentially static, with the user hopping between a series of connected static panoramas rather than interactively exploring a 3D space.

Less common are the still-experimental workflows, often designed for VR, that use Gaussian splatting techniques to represent 3D spaces in near-photorealistic detail. Meta’s Reality Labs is a leader in this area - their Hyperscape program is perhaps the most advanced all-in-one workflow for scanning and recreating real-world spaces. The key innovation of this system, however, is also its primary limitation - spaces are captured by the cameras in the company’s Quest 3 headsets, processed on Meta’s servers and fed back to the user exclusively in VR. Meta’s virtual reality walled garden strictly limits the creative potential of these spaces - if this is the future of spatial capture, that future will be circumscribed by the financial interests and imperatives of Meta.

Chongqing, October 15th 2025

Jonathan Russell

The Urban Fragments Project, then, is not at the cutting edge of spatial capture technology - rather, it is an alternative to the status quo, a custom-tuned workflow with custom criteria. LLM-assisted coding opens up new possibilities in this area - artists and researchers with limited coding experience can create personalised workflows that allow for prototyping and rapid iteration that would once have been beyond reach. For the Fragments project, the outcome is an end-to-end process that allows for the capture, processing and sharing of small spaces - alleyways, walls, tiny corners of a city - on a daily basis with a minimum of effort. Four key criteria guided the development of the workflow towards its final form: phenomenological richness, ease of capture, ease of processing and ease of viewing.

The first criterion, phenomenological richness, dictated the form of the final scan: a recognisable but impressionistic 3D reconstruction of the real space that can be viewed in real time, accompanied by ambient audio captured simultaneously. Where many 3D scan workflows produce a textured and triangulated 3D mesh, Fragments are instead made up of around 250,000 individually-coloured voxels with a subtle pulsing effect. This somewhat-abstracted representation gives the space a sense of solidity and helps to smooth over imprecision or inaccuracies in the scan.
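As a rough illustration of how a coloured point cloud can be reduced to individually-coloured voxels, the sketch below snaps each point to a cubic grid cell and averages the colours that land in the same cell. It is a minimal sketch rather than the project's own script: the function name `voxelise`, the `(x, y, z, r, g, b)` tuple layout and the `voxel_size` parameter are all assumptions made for the example.

```python
import math
from collections import defaultdict


def voxelise(points, voxel_size):
    """Group coloured points into cubic voxels and average each voxel's colour.

    points: iterable of (x, y, z, r, g, b) tuples (hypothetical layout).
    voxel_size: edge length of a voxel, in the same units as x, y, z.
    Returns a dict mapping integer grid coordinates (i, j, k) to (r, g, b).
    """
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")

    # For each grid cell, accumulate colour sums and a point count.
    sums = defaultdict(lambda: [0, 0, 0, 0])
    for x, y, z, r, g, b in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        acc = sums[key]
        acc[0] += r
        acc[1] += g
        acc[2] += b
        acc[3] += 1

    # Average the accumulated colours so each voxel gets a single colour.
    return {
        key: (round(r / n), round(g / n), round(b / n))
        for key, (r, g, b, n) in sums.items()
    }
```

Because many nearby points collapse into one voxel, small errors in individual point positions and colours are averaged away, which is one reason a voxel representation can be more forgiving of an imprecise scan than a triangulated mesh. A larger `voxel_size` gives fewer, coarser voxels; a smaller one preserves more detail at the cost of a heavier scene.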

The second criterion, ease of capture, dictated a workflow fed solely by hand-held smartphone video, without specialised cameras, LIDAR scanners or stabilisation hardware. Capture of a small space (10-15 cubic metres) takes 2 minutes or less. Unlike Meta’s Hyperscapes or Matterport’s digital twins, the Fragments are intended as snapshot-like travel photos - taken on a whim, without preplanning. Reducing the friction of capture is key to enabling non-professional users to capture and share their experience of a space.

Ease of processing required that all intermediate steps between capturing and viewing a space be completely hands-off - as simple as sharing an Instagram video. In practice, the scan video is uploaded to a Google Drive folder that is automatically synchronised to a remote laptop running the processing software. This software grabs the video, extracts its geolocation and ambient audio, then divides the video into around 1000 equally-spaced still frames. These images are fed to a local instance of Meshroom, a photogrammetry software package that uses structure-from-motion algorithms to build a coloured 3D point cloud. The workflow feeds this point cloud into a Python script that converts points to voxels and creates an HTML page to display the voxels, ambient audio and location information using the A-Frame framework and the three.js library. The workflow then updates a custom map page with the new scan’s location and uploads the scan and updated map to a website hosted on Amazon Web Services (AWS). The process takes around 4 hours but requires no further input from the person scanning - this allows for daily updates to the project while travelling.
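The frame-sampling step gives a sense of the numbers involved. The sketch below computes evenly spaced timestamps at which still frames could be pulled from a clip; the function name `frame_timestamps` and the choice to sample at the centre of each interval are assumptions for illustration, not a description of the project's actual code.

```python
def frame_timestamps(duration_s, n_frames):
    """Return n_frames timestamps (in seconds) evenly spaced across a clip.

    Each timestamp sits at the centre of its interval, so no frame is taken
    at the very first or very last instant of the video.
    """
    if duration_s <= 0 or n_frames <= 0:
        raise ValueError("duration_s and n_frames must be positive")

    step = duration_s / n_frames  # seconds between consecutive frames
    return [(i + 0.5) * step for i in range(n_frames)]
```

For a clip at the upper end of the capture time, `frame_timestamps(120.0, 1000)` yields one frame every 0.12 seconds, or roughly 8 frames per second of video. Shorter clips sampled into the same number of frames would be sampled more densely.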

The final key criterion for the Fragments workflow is ease of viewing. A snapshot-like recreation of a place is only useful if it can be easily and widely shared. Meta’s Hyperscapes, for all their realism, can only be viewed on the company’s latest VR headsets within the confines of the company’s own viewing app. Fragments, by contrast, are platform-agnostic and require no specialised software beyond a web browser - the site is also adapted for both mobile and desktop viewing for maximum accessibility.

Within the boundaries of the above criteria, the Urban Fragments Project imagines a future of snapshot-like 3D capture of the phenomenology of small urban spaces. Outside the walled-garden status quo of Meta or Matterport, the technologies and techniques already exist to capture the experience of all kinds of intimate, overlooked ‘ordinary’ places that deserve to be recorded and remembered - the Fragments Project is a provocation towards that possible future.

The Urban Fragments project can be explored at fragment.jrau.info.